When a resident says “my Wi-Fi is down,” they’re really saying “my life is on pause.” In multifamily broadband, that pressure lands on the NOC, even when the fault is a power blip in one building, a bad ONT, or a noisy upstream.

AI network operations can help, but only when they’re built like a safety system, not a magic button. The best wins come from assistive workflows: faster ticket handling, earlier outage signals, and better clues for first responders, with humans still in charge.

This guide focuses on practical patterns that fit how MDU providers actually work: mixed vendors, imperfect topology, noisy telemetry, and tight response targets.

What “AI-assisted” looks like in a real multifamily NOC

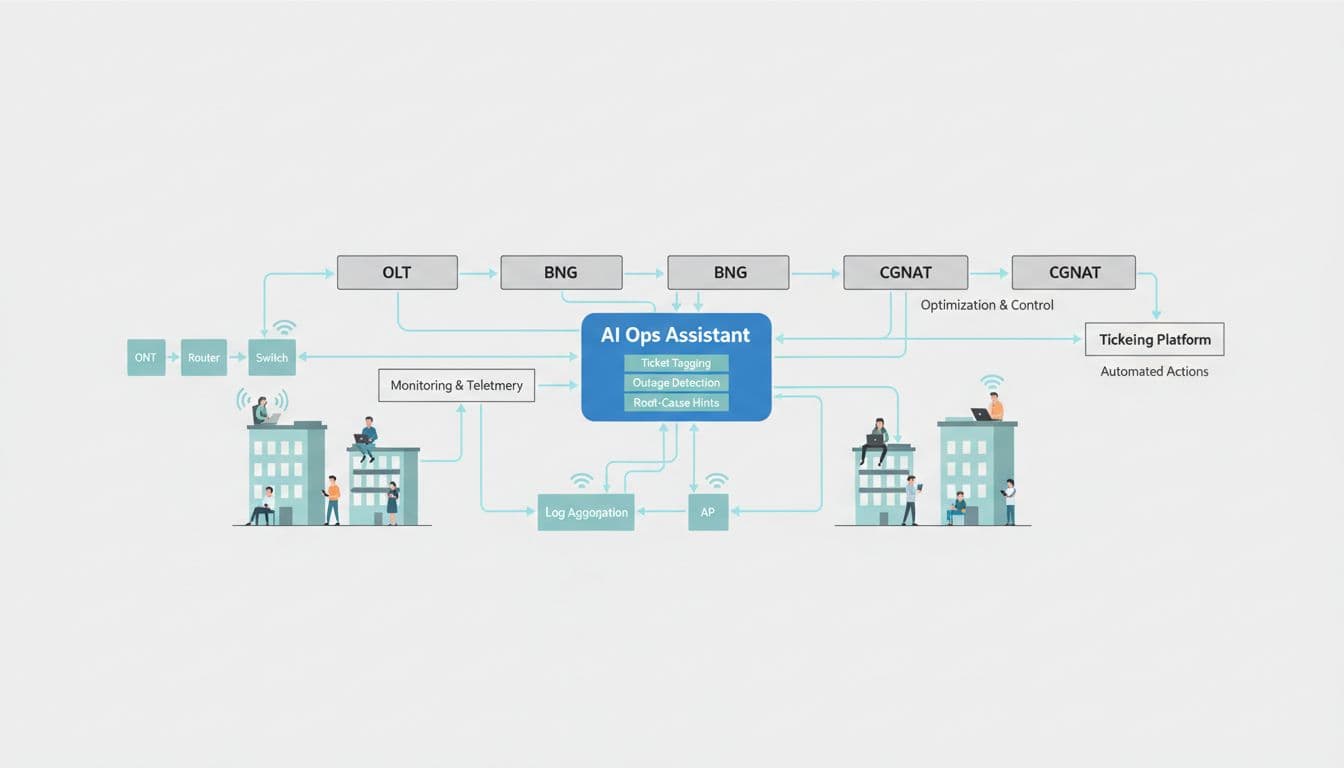

Think of an AI ops assistant as a smart layer between your monitoring stack and your ticketing system. It watches the same events your team already sees, then suggests the next best “ops move,” with receipts.

A practical reference architecture has five parts:

- Signals in: SNMP/streaming telemetry, syslog, RADIUS/AAA, DHCP leases, ONT/OLT stats, Wi-Fi controller events, CPE health checks, synthetic tests, and ticket history.

- Normalization and context: a lightweight inventory (site, building, unit, circuit IDs), plus topology hints (OLT port to splitter to ONT, AP to switchport). This can start as a best-effort map and improve over time.

- Decision layer: rules plus ML/LLMs. Rules handle known truths (“if OLT port down, don’t blame Wi-Fi”). ML handles anomaly detection and clustering. LLMs help with text, like summarizing ticket notes and suggesting tags.

- Guardrails: confidence scores, human-in-the-loop approval, audit logs, and fallback behavior.

- Outputs: tags, priority suggestions, outage banners, and root-cause hints pushed into your ticketing and alert tools.

Vendor platforms already push in this direction. For a property-focused view of AI features in MDU Wi-Fi, see RUCKUS’s guide to smarter MDU networks with AI. Even if you don’t run that stack, it’s a helpful checklist of what “assistive ops” should feel like.

Ticket tagging that saves time without breaking trust

Ticket tagging sounds small until you measure it. In multifamily, a high share of effort is not “fixing,” it’s sorting: What type of issue is this, who owns it, and how urgent is it?

A strong tagging workflow combines deterministic rules with LLM help:

A safe tagging workflow (rules first, LLM second)

- Extract structured clues (no AI yet): site ID, building, unit (if present), CPE MAC, ONT serial, AP name, last-online, RSSI/SNR, upstream/downstream errors, recent change tickets.

- Apply hard rules:

- If the device is offline and last-online was under 5 minutes ago, tag `transient`, set priority low.

- If the ONT is offline and the OLT port is down, tag `access-fiber`, set owner `network`.

- If resident device count is zero but the AP is healthy, tag `resident-device`, set owner `support`.

- Use an LLM for text classification and summary:

- Input: ticket subject, chat notes, last 20 log lines, plus the structured clues.

- Output: suggested category, top 3 tags, and a 2 to 3 sentence summary.

- Require confidence gates:

- At 0.90+, auto-apply tags but keep a visible “AI-applied” marker.

- At 0.60 to 0.89, show suggestions, require one-click approval.

- Below 0.60, do nothing, only show a “possible matches” panel.
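
The hard-rules step above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `Clues` fields, the `RuleResult` shape, and the function name are hypothetical, and checking the access-fiber rule first is an assumption (a down OLT port explains anything downstream of it).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Clues:
    """Structured clues extracted before any model runs (field names are illustrative)."""
    device_online: bool
    minutes_since_last_online: Optional[float]
    ont_online: bool
    olt_port_up: bool
    ap_healthy: bool
    resident_device_count: int


@dataclass
class RuleResult:
    tag: str
    owner: Optional[str] = None
    priority: Optional[str] = None


def apply_hard_rules(c: Clues) -> Optional[RuleResult]:
    """Return the first matching rule, or None so the ticket falls through to the LLM step."""
    # Assumed ordering: an access-fiber fault is checked first.
    if not c.ont_online and not c.olt_port_up:
        return RuleResult(tag="access-fiber", owner="network")
    # Device dropped less than 5 minutes ago: likely a blip.
    if (not c.device_online
            and c.minutes_since_last_online is not None
            and c.minutes_since_last_online < 5):
        return RuleResult(tag="transient", priority="low")
    # AP is healthy but no resident devices are attached.
    if c.ap_healthy and c.resident_device_count == 0:
        return RuleResult(tag="resident-device", owner="support")
    return None
```

Returning `None` when no rule matches keeps the deterministic layer honest: it only speaks when it has a known truth, and everything else goes to the text-classification step.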

This setup reduces noise while keeping operators in control. It also creates training data naturally: every accepted or corrected tag becomes a labeled example.
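
As a rough sketch of the confidence gates and the "labeled example" idea, assuming the classifier returns a single confidence between 0 and 1: the action names, `TagDecision`, and `labeled_example` are hypothetical, and only the 0.90 and 0.60 thresholds come from the workflow above.

```python
from dataclasses import dataclass, field
from typing import List

AUTO_APPLY_THRESHOLD = 0.90   # auto-apply, but mark as AI-applied
SUGGEST_THRESHOLD = 0.60      # show suggestion, require one-click approval


@dataclass
class TagDecision:
    action: str                      # "auto_apply", "needs_approval", or "possible_matches"
    tags: List[str] = field(default_factory=list)
    ai_applied: bool = False         # drives the visible "AI-applied" marker


def gate(tags: List[str], confidence: float) -> TagDecision:
    """Map a model confidence to one of the three operator experiences."""
    if confidence >= AUTO_APPLY_THRESHOLD:
        return TagDecision("auto_apply", tags, ai_applied=True)
    if confidence >= SUGGEST_THRESHOLD:
        return TagDecision("needs_approval", tags)
    # Low confidence: apply nothing, only populate a "possible matches" panel.
    return TagDecision("possible_matches", tags)


def labeled_example(ticket_id: str, suggested: List[str], final: List[str]) -> dict:
    """Record what the model suggested versus what the operator kept."""
    return {
        "ticket_id": ticket_id,
        "suggested": suggested,
        "final": final,
        "corrected": set(suggested) != set(final),
    }
```

Storing the operator's final tags next to the suggestion is what turns everyday triage into evaluation and training data later.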

If you want inspiration for how NOCs think about automation in ticket flows, this practitioner write-up, AI and automation for access network ticketing in NOCs, is a good reality check.

Outage detection and root-cause hints (with guardrails)

Outage detection and root-cause hints are where AI network operations can pay off fast, but they’re also where false positives can burn credibility. The trick is to treat AI outputs as “evidence,” not “truth.”

Outage detection that matches how MDUs fail

Outages in apartments often look like patterns, not single alarms: a cluster of units in one building drops, or one OLT PON goes unstable, or a switch stack causes “random” Wi-Fi complaints.

A practical detection approach:

- Baseline per location and per interface, not just network-wide averages.

- Combine three signal types: device telemetry (ports, optical levels), client experience (DHCP success, authentication failures), and ticket rate.

- Cluster by topology hint: building, MDF/IDF, OLT port, controller zone.
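
To make "baseline per location and per interface" concrete, here is a minimal sketch that keeps a rolling history per key and scores each new sample against it. The class name, the window sizes, and the choice of a z-score are assumptions for illustration; a production system might prefer seasonal baselines or robust statistics.

```python
from collections import defaultdict, deque
from statistics import mean, pstdev
from typing import Hashable, Optional


class PerKeyBaseline:
    """Rolling baseline per key, e.g. ("site-12", "bldg-A", "dhcp_failures")."""

    def __init__(self, window: int = 288, min_samples: int = 12):
        self.min_samples = min_samples
        # One bounded history per key; old samples fall off automatically.
        self.history = defaultdict(lambda: deque(maxlen=window))

    def score(self, key: Hashable, value: float) -> Optional[float]:
        """Return the z-score of value against this key's history, then record it.

        Returns None until the key has at least min_samples samples.
        """
        hist = self.history[key]
        z = None
        if len(hist) >= self.min_samples:
            mu = mean(hist)
            sigma = pstdev(hist)
            if sigma > 0:
                z = (value - mu) / sigma
            else:
                # Flat history: any deviation is infinitely unusual.
                z = 0.0 if value == mu else float("inf")
        hist.append(value)
        return z
```

One design question this sketch leaves open is whether anomalous samples should be added to the history at all; here they are, which means a long outage slowly becomes the new normal.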

Simple pseudo-logic that works well:

- If “tickets per 10 minutes” jumps above baseline by X and at least Y% share the same building ID, raise “possible building outage.”

- If multiple ONTs on the same PON show rising retries or loss, raise “PON degradation” even if nothing is fully down.

- If the only change is ISP upstream loss, mark “upstream suspected” and suppress internal truck rolls.
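
The first rule above might look like the following sketch. It reads "jumps above baseline by X" as a multiplier (`jump_factor`) and Y% as `min_share`; both interpretations, the default values, and the `min_tickets` floor are assumptions chosen for illustration, not tuned numbers.

```python
from collections import Counter
from typing import List, Optional


def possible_building_outage(
    building_ids: List[str],
    baseline_count: float,
    jump_factor: float = 3.0,
    min_share: float = 0.6,
    min_tickets: int = 3,
) -> Optional[str]:
    """Return the building ID to flag as a possible outage, or None.

    building_ids holds one entry per ticket opened in the last 10 minutes.
    """
    total = len(building_ids)
    # A floor avoids flagging an outage on one or two tickets at a quiet site.
    if total < min_tickets or total < baseline_count * jump_factor:
        return None
    building, count = Counter(building_ids).most_common(1)[0]
    return building if count / total >= min_share else None
```

For example, ten tickets in ten minutes against a baseline of two, with eight of them from one building, would raise "possible building outage" for that building under these defaults.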

For background on how anomaly detection is used in broadband ops, Nokia’s overview, smarter broadband anomaly detection with AI, maps well to this type of workflow.

Root-cause hints that help first responders

Root-cause analysis is often a time sink because the clues live in different places: alarms, logs, topology, and the last three “similar” incidents.

Root-cause hints work best when they’re framed like this: “Here are the top hypotheses, and here’s why.”

Common hint outputs in MDU networks:

- “Most affected units share OLT PON 3/1/7; optical power dropped across 14 ONTs.”

- “APs are up, but DHCP failures spiked; check DHCP relay on switch stack B.”

- “CGNAT pool utilization hit 95% before complaints; check subscriber sessions and pool sizing.”
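
Hints like the first one above can come from a simple count of which topology elements the affected units share. The sketch below assumes a best-effort `topology` mapping from an opaque unit key to its known upstream elements; the function name, the mapping shape, and the 50% cutoff are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Tuple


def rank_shared_elements(
    affected_units: List[str],
    topology: Dict[str, Dict[str, str]],
    min_share: float = 0.5,
) -> List[Tuple[str, str, float]]:
    """Rank topology elements by the share of affected units that sit behind them.

    Returns (element_kind, element_id, share) tuples, highest share first.
    """
    total = len(affected_units)
    if total == 0:
        return []
    counts: Counter = Counter()
    for unit in affected_units:
        # Units missing from the map contribute nothing (topology is best-effort).
        for kind, element in topology.get(unit, {}).items():
            counts[(kind, element)] += 1
    hypotheses = [
        (kind, element, n / total)
        for (kind, element), n in counts.items()
        if n / total >= min_share
    ]
    hypotheses.sort(key=lambda h: h[2], reverse=True)
    return hypotheses
```

The output is deliberately a ranked list with shares rather than a single answer, which matches the "top hypotheses, and here's why" framing: the operator sees that every affected unit sits on one PON while only some share a building.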

Graph-based context helps here. If you’re exploring topology graphs or digital twins, this example, finding root-causes using a network digital twin graph, is a useful mental model even if you build a smaller version.

Use cases, data, and effort (quick planning table)

| Use case | Data needed | Tools to integrate | Effort level | Expected impact |
|---|---|---|---|---|
| Ticket tagging and summaries | Ticket text, categories, resolution codes, basic inventory/site mapping | ServiceNow/Jira/Zendesk, LLM API, log store | Low to medium | Faster triage, better reporting quality |
| Outage detection (building, PON, upstream) | Telemetry time series, topology hints, ticket rate, synthetic checks | NMS/observability (Prometheus, Datadog, Splunk), alerting, ticketing | Medium | Earlier detection, fewer duplicate tickets |
| Root-cause hints | Historical incidents, topology graph, correlated alarms/logs, change records | CMDB/inventory, log analytics, graph store (optional), LLM for summaries | Medium to high | Shorter MTTR, fewer misrouted dispatches |

Safety, privacy, and failure modes (don’t skip this)

- No automatic config changes without explicit approval. Start with “suggest and explain.”

- Audit logs for every AI output: inputs, model/version, confidence, and who accepted it.

- Resident privacy: minimize PII in prompts and storage. Prefer hashed identifiers for devices, keep unit numbers out unless required, set retention limits, and restrict access with least privilege.

- Known failure modes: noisy telemetry, incomplete topology, “ticket storms” from a single bad notification, and silent failures where devices look up but service is broken. Plan for all of them with suppressions, sanity checks, and clear operator override.
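
Two of these items, audit logs and hashed identifiers, are easy to prototype. The sketch below is one possible shape, with hypothetical function and field names. It uses a keyed hash (HMAC-SHA256) rather than a plain hash, which is a choice made here and not something the checklist prescribes: MAC addresses and serials are guessable enough that an unkeyed hash can be reversed by brute force.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone
from typing import Optional


def pseudonymize(identifier: str, secret: bytes) -> str:
    """Keyed hash so raw MACs or serials never reach prompts or logs."""
    digest = hmac.new(secret, identifier.lower().encode(), hashlib.sha256)
    return digest.hexdigest()[:16]  # truncated for readability (an assumption)


def audit_record(
    inputs: dict,
    model_version: str,
    confidence: float,
    output: dict,
    accepted_by: Optional[str] = None,
) -> str:
    """Serialize one AI output with the fields listed above as a JSON line."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "inputs": inputs,              # should already be pseudonymized
        "model_version": model_version,
        "confidence": confidence,
        "output": output,
        "accepted_by": accepted_by,    # None until an operator acts on it
    }
    return json.dumps(record, sort_keys=True)
```

The same pseudonym for the same device makes records joinable across tickets without storing the raw identifier; rotating `secret` breaks that link, which can double as a retention control.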

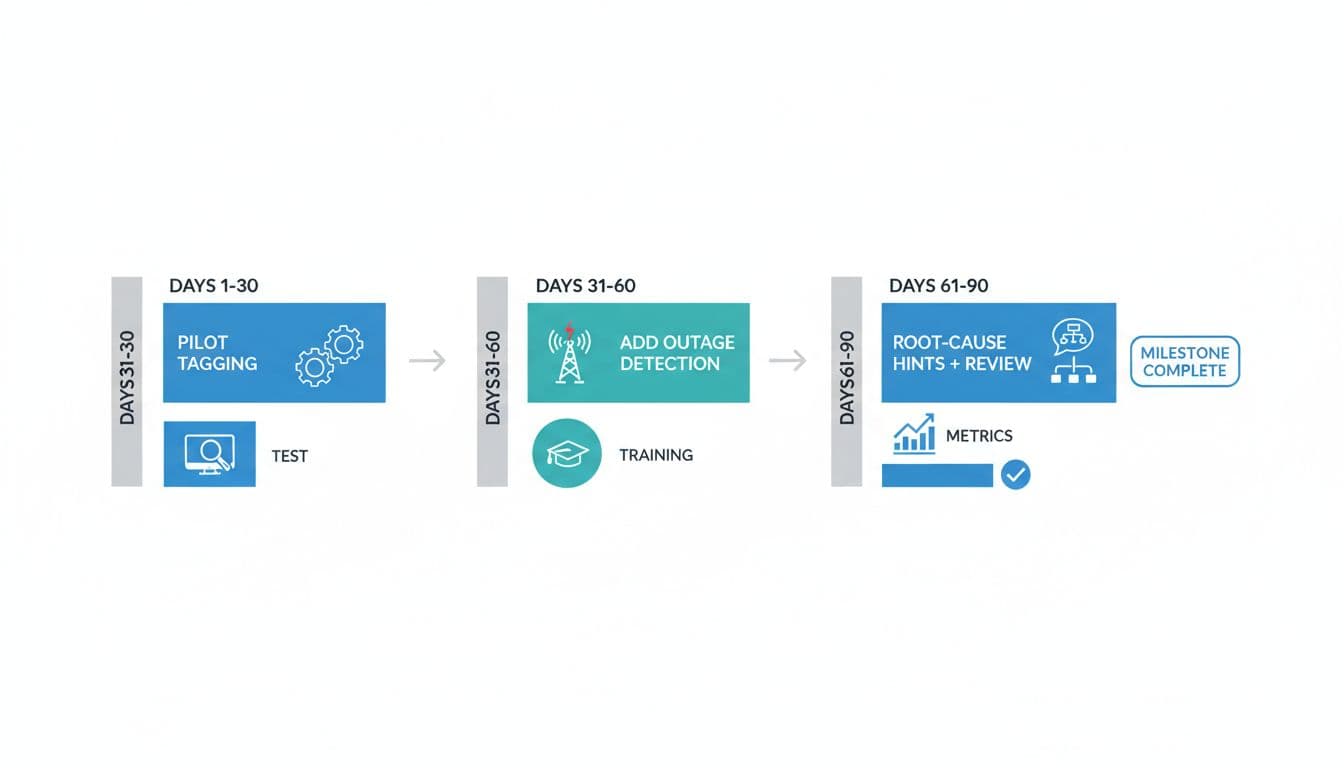

A simple 30/60/90-day rollout plan that won’t overwhelm the team

Days 1 to 30 (pilot ticket tagging): connect your ticket system, define a tagging taxonomy, add confidence gates, and run shadow mode for two weeks (suggestions only). Measure time-to-triage and tag accuracy.

Days 31 to 60 (add outage detection): pick two outage types (building-wide and PON degradation). Start with alert-only, then auto-create a “parent incident” ticket once precision is acceptable.

Days 61 to 90 (root-cause hints and review): build a small incident knowledge base from resolved tickets, add topology hints, and generate hypothesis lists with cited signals. Hold weekly review to tune thresholds and retire bad rules.

The best AI network operations programs in multifamily don’t try to replace operators. They reduce the busywork, surface patterns earlier, and put likely causes in front of the person who can act. Start with ticket tagging, earn trust with confidence gates and audit logs, then move toward outage detection and root-cause hints. The question to ask your team is simple: which step in today’s workflow wastes the most human attention, and can AI assist without taking unsafe control?
